This is the first part of a two-part essay, which I originally presented at conferences in the spring of 2014. Part two will be posted tomorrow. The full version of the essay, which I’m happy to share with anyone interested, includes a section on the place of innovation speak in the academic sub-discipline of business history.

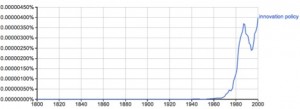

Use of the word “innovation” began rising in the mid-1940s, and it’s never stopped since. At times, its growth has been exponential; at others, merely linear; but we hear the word today more than ever.

A Google Ngram for the word “innovation” from 1800 to 2000

This curve has consequences. It results in concrete stories like this one: a few years ago, a friend of mine was teaching a course that touched on science and technology policy. One of his class sessions that semester fell on the day of President Barack Obama’s State of the Union Address. Somehow the topic of innovation came up during class discussion. My friend joked that the students should pay attention to how many times the President used the word “innovation.” Perhaps, he said, they should use it as the basis of a drinking game: take a sip every time Obama utters the word. The students chuckled. The class moved on. That night, my friend watched the State of the Union Address himself. Part of the way into it, as the word “innovation” flew from the President’s mouth again and again, my friend was suddenly overcome with fear. What if his students had taken him seriously? What if they had decided to use shots of hard liquor in their game instead of merely sipping something less alcoholic? He had anxious visions of his students getting alcohol poisoning from playing The Innovation Drinking Game and of himself being fingered for it by the other students. Because the President used the word so very often, my friend went to bed wondering whether his misplaced joke would jeopardize his chances at tenure. Such is life in the era of innovation.

Of course, the rise of innovation speak has greater consequences than college drinking games and the worries of tenure-track assistant professors, or it would hardly be worth considering. Innovation speak has come to guide social action, or at least structure desirable actions for some. These trends look much more dramatic if you consider terms and phrases, such as “innovation policy,” which virtually came out of nowhere in the mid-1970s.

A Google Ngram for the phrase “innovation policy” from 1800 to 2000

Terms like innovation policy move us beyond an earlier moment where scholars and science and technology policy wonks were simply noting innovation as a process that happened in the world. The point became that innovation could be fostered, manipulated, instrumentalized. Policy-makers could take actions that would increase innovative activity, and, therefore, it became important to learn what factors gave rise to innovation. What are the “sources of innovation”? (Von Hippel) What kind of national and regional “systems” fostered its growth? (Lundvall, Freeman, Nelson) How could managers harness rather than be destroyed by the “gales of creative destruction”? (Christensen) How could localities learn from successful hubs of innovative activity, most paradigmatically during this period, Silicon Valley? (Porter) Many academic disciplines entered this growing space, searching for the roots of innovation, hoping to capture it in a bottle. Historians were recruited into this effort, since they can supposedly draw lessons from the past about innovation.

Yet, ironies appear. During this same period, the moment of high innovation speak, economic inequality has risen dramatically in the United States, as have prison populations. Middle-class wages have more or less stagnated, while executive salaries have skyrocketed. The United States’ life expectancy, while rising, has not kept pace with that of other nations, and its infant mortality rate has compared less and less favorably with those of other countries since 1980. The country has also fallen behind in most measures of education. One could go on with statistics like these for a long time. Put most severely, during the era of innovation speak, we have become a worse people. This claim is not causal. Certainly many other complicated factors have contributed to these sad statistics, but it is also not clear that thinking about innovation policy, which has taken so much of the time and energy of so many bright people, has done much to alleviate our problems. And some actions taken in the name of innovation and entrepreneurship, like building science parks, probably do little more than give money to the highly educated and fairly well-to-do.

There are still questions to ask, however, even if the link between innovation speak and certain forms of decline is not causal. What has driven the adoption of innovation speak? Why have academics glommed on to this idea when things around them were falling apart? What have they missed by focusing on innovation and its related topics? How do they justify working on innovation when so much else is wrong in our world?

When we cast our eyes on academics using the I-word, what we see are certain habitual patterns of thought, a reliance on specific concepts and metaphors, and a dependence on questions related to those concepts. Let me give one example: the sociologist Fred Block has co-edited a volume titled State of Innovation: The U.S. Government’s Role in Technology Development, and he also wrote its introductory essay. In the opening of that essay, Block rehearses three well-known problems (the trade deficit, global climate change, and unemployment) that President Barack Obama faced as he entered office. Then Block writes, “For all three of these reasons, the new administration has strongly emphasized strengthening the U.S. economy’s capacity for innovation.” From one perspective, Block’s statement makes perfect sense. From another, once we take into account all of the problems listed above, the statement seems slightly crazed. Why would innovation policy be an answer to anything but the most superficial of these issues? Consider all of the assumptions and patterns of thought and life that must be in place for Block’s words to seem like common sense.

The goal for academic analysis must be to uncover these assumptions and explain their historical genesis. In striving for this objective, we have a great deal of help, because this is precisely the kind of work that anthropologists, cultural historians, scholars of cultural studies, and critics of ideology have been doing for generations. We also know of historical analogies that share structures with innovation speak. For instance, over fifty years ago, Samuel P. Hays published his book, Conservation and the Gospel of Efficiency: The Progressive Conservation Movement, 1890–1920. Hays explained how the notion of efficiency rose to prominence among certain groups of actors during the period he investigated. Ponder, then, how the utterances of a specific actor fit into this overall trend. After World War I, slightly after the period Hays’ book covers, Herbert Hoover took over as Secretary of Commerce and used the position to push an agenda of efficiency. As Rex Cochrane writes in his official history of the National Bureau of Standards, a division of the Commerce Department, “Recalling the scene of widespread unrest and unemployment as he took office, Hoover was later to say: ‘There was no special outstanding industrial revolution in sight. We had to make one.’ His prescription for the recovery of industry ‘from [its] war deterioration’ was through ‘elimination of waste and increasing the efficiency of our commercial and industrial system all along the line.’” Hoover’s formulation of the hopes of efficiency closely resembles Block’s formulation of the hopes of innovation. Thanks to Hays and others, we now recognize the ideological status of Hoover’s words. Although Hoover may have believed that his thoughts were his own, we can see them as part of a larger trend. Moreover, at least to the degree that efficiency formed the basis of Frederick Winslow Taylor’s “scientific management” and other cultural products of the era, we know that efficiency was often a mixed blessing. Its value depended on who you were. Managers loved efficiency; workers loathed it. The same often holds true for innovation.

The goal of this essay is to offer a first take on the rise of innovation speak. A classic question in the literature on the Progressive Era, to which Hays’ book was a major contribution, was, “Who were the progressives?” I cannot yet describe any clear “innovation movement” as Hays described a conservation movement. It will take more time and perhaps a longer historical perspective to answer the question, “Who were the innovation speakers?” My aims here are humbler. As I have argued elsewhere, many incentives drive people to use the word “innovation.” In a review of Philip Mirowski’s book, Science-Mart, I wrote, “In the second chapter, ‘The “Economics of Science” as Repeat Offender,’ Mirowski lays much of the blame for our current state at the feet of economists of science, technology, and innovation. Mirowski is right to go after economists as the chief theorists of our moment. ‘Innovation’ has become the watchword, and public policy has congealed around fostering it as the primary source of economic growth. But Mirowski goes too far. Many share responsibility for our current myopic focus on innovation as the key to societal improvement, including politicians reaching for appealing rhetoric and numerous groups opportunistically looking for handouts (e.g., university administrators; academic and industry scientists and engineers; firms, from large corporations to small startups; bureaucrats in government labs).” If this is right, then the goal must be to examine why different groups have taken to innovation speak. For example, academic researchers live not in the land of milk and honey but in the land of grants and soft money and, to win treasure, are bidden to speak of innovation, particularly by the National Institutes of Health, which requires a whole section on the I-word in its proposals.

This essay moves through three sections. This introduction and the first section will be published today; the second and third sections, on Wednesday. The first section examines some general drivers that have led to an increased focus on innovation in US culture. In the second section, I narrow my focus to the world of academia and the rise of innovation speak in that sphere. This rise tracks with the drivers described in the first section, in part because academics advised government as it responded to the broad problems that heightened the focus on innovation. Economists, as Philip Mirowski has described, played a major role here, but I will also focus on the business school as a core mediator between academics and non-academics on the topic of innovation. Finally, in the third and concluding section, I will argue that, for both moral and social scientific reasons, we should give up the word “innovation.”

Drivers

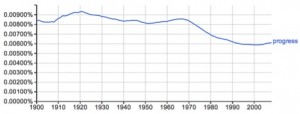

Several factors drove the rise of innovation speak, including some long, deep trends in Western civilization. For instance, the word “progress” had crashed upon the shoals of the late 1960s and early 1970s, a period marked by a triumvirate of factors typically remembered as the Vietnam War, Watergate, and perceptions of environmental crisis. This growing skepticism included doubt that technology would inevitably lead to a better future. Innovation became a kind of stand-in for progress. Innovation had two faces when it came to this matter, however. On the one hand, in its vaguest sense, the sense often used in political speeches and State of the Union Addresses, innovation means little more than “better.” In these instances, innovation is as close to progress as one could come without saying the word “progress.” On the other hand, in its more technical definitions, “innovation” lacked progress’s sense of “social justice” or the betterment of all. Innovation need not serve the interests of all; in fact, it typically doesn’t.

A Google Ngram for the word “progress” from 1900 to 2000 shows a decline in the word’s usage beginning—no surprises—in the late 1960s

These two faces of innovation allowed a certain kind of rhetorical slipperiness on the part of speakers. They could use it to mean progress but, when pushed, retreat to a technical definition. This slipperiness also meant that innovation was catholic politically. Both liberals and conservatives felt innovation’s pull. The term was vague enough that no one needed to feel as if it conflicted with his or her beliefs.

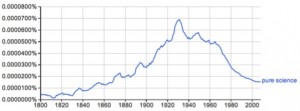

Another factor tied to a long trend was a change in thinking about science and technology during this period. The pure science ideal that emerged in the 19th century began faltering in the 1970s and collapsed almost completely in 1980 with the Diamond v. Chakrabarty decision, which ruled that genetically engineered organisms could be patented, and the passage of the Bayh-Dole Act, which allowed recipients of federal research money to patent the inventions that funding produced. These events signaled and pushed forward deep changes in the nature of scientific practice in the United States. In the early 20th century, scientists were blacklisted if they went to work for industry. Beginning in the 1980s, academic scientists weren’t hip unless they had a startup or two. One result of these changes was the death of the so-called Mertonian norms: communalism (or the sharing of all information), universalism (or the ability of anyone to participate in science regardless of personal identity), disinterestedness (or the willingness to suspend personal interests for the sake of the scientific enterprise), and organized skepticism. Scholars in science and technology studies have shown again and again that science never lived up to these norms, that secrecy and other counter-norms were just as prevalent in ordinary scientific practice as the ones Merton identified. In the late 20th century, however, these norms no longer functioned even as ideals. How can you aspire to communalism when you are striving for patents and the commercialization of proprietary knowledge?

A Google Ngram for the exact phrase “pure science” from 1800 to 2000

In a series of recent essays, the historian of science Paul Forman has tried to characterize the shifts inherent in this post-Mertonian, postmodern moment. In one formulation, Forman argues that, before the 1970s, science took precedence over technology, both because it was believed to precede it (science gave rise to technology) and because it held higher prestige. The intellectual portion of this priority was best captured in the so-called “linear model,” which asserted that scientific discoveries led to technological change. During the postmodern moment, however, the relationship between science and technology inverted, so that practical outcomes (and, one might add, profit) became the foremost goal, or so Forman argues. These changes are easy to see in recent discussions of, and fretting over, STEM (science, technology, engineering, and math) in debates about secondary and higher education in the United States. While the term ostensibly includes science, it isn’t science in the sense of knowledge for knowledge’s sake. It is almost always science that is actionable, usable, commercially viable, science that will make the nation globally competitive. And this focus on competition brings us to our next factor.

So far, I have focused on two factors (the death of progress and shifts in scientific ideals) that were related to long trends in the history of Western culture, but there were more immediate causes of the rise of innovation speak. Perhaps most important among them were the stagflation and declining industrial vitality that marked the 1970s. Of course, this downturn was itself related to longer trends, including the period of perceived industrial expansion that, excepting the Great Depression, had begun in the late 19th century. The downturn seemed dire in the context of the postwar economic boom of the 1950s and early 1960s. Policy-makers worried aloud about the health of industry, and members of the Carter administration discussed the adoption of an “industrial policy” to spur on “sick industries” and halt the country’s slide into obsolescence.

The terms “innovation policy” and “industrial policy” shot up around the same time, but industrial policy faltered in the late 1980s and plateaued throughout the 1990s before sliding into disuse. The victory of innovation policy over industrial policy had consequences. Industrial policy was broader than innovation policy. It included the whole sweep of industrial technologies, inputs, and outputs, while innovation policy placed heavy emphasis on the cutting edge, the so-called “emerging technologies.” Industrial policy also included a focus on labor, while innovation policy typically does not, except to worry about the availability of knowledge workers trained in STEM, that is, labor as seen through the human resources paradigm.

The emerging innovation speakers were not content to focus on the economic recession at hand, however. Economic decline, for them, was much worse given that it was a decline relative to other nations. In other words, innovation speakers needed an other. And innovation speak, to this day, typically involves members of a global superpower worrying about its standing in the world. It’s a worry of empire. In the 1980s, the other was Japan, whose managers and workers were supposedly making great strides because of some mysterious thing hitherto unknown in the West, something rooted in their culture. Focus on Japan developed in the context of a new discourse about “globalization,” a term whose use skyrocketed beginning in the late 1980s. Fear of Japan was expressed not only in popular culture, in movies and novels such as Gung Ho (1986) and Rising Sun (novel, 1992; film, 1993), but also in academic work: analysis of Japanese production techniques became a cottage industry during this period. Analysts of Japan were often central contributors to the rise of innovation speak, at least amongst academics, as I will explore in the next section.

Ironically, Michael Crichton’s Rising Sun, the most vehemently anti-Japanese expression of American pop culture, came out shortly after Japan’s economy fell into a crisis from which it has never fully recovered. But we have found other others, perhaps because we cannot live without them. In the early 1990s, the Federal Bureau of Investigation was already quizzing American scientists who went to professional conferences that also had Chinese scholars in attendance, and current anxieties about American innovativeness often mention China’s investments in science and technology. Talk of innovation is often talk about our relative position in the world. The same holds true of current worries about STEM education. Talk of STEM is almost always a worry about economic competitiveness, not about the beauties of science. As the physicist Brian Greene explained on the radio program On Being, “The urgency to fund STEM education largely comes from this fear of America falling behind, of America not being prepared. And, sure, I mean, that’s a good motivation. But it certainly doesn’t tell the full story by any means. Because we who go into science generally don’t do it in order that America will be prepared for the future, right? We go into it because we’re captivated by the ideas.” Technology trumps idle curiosity.